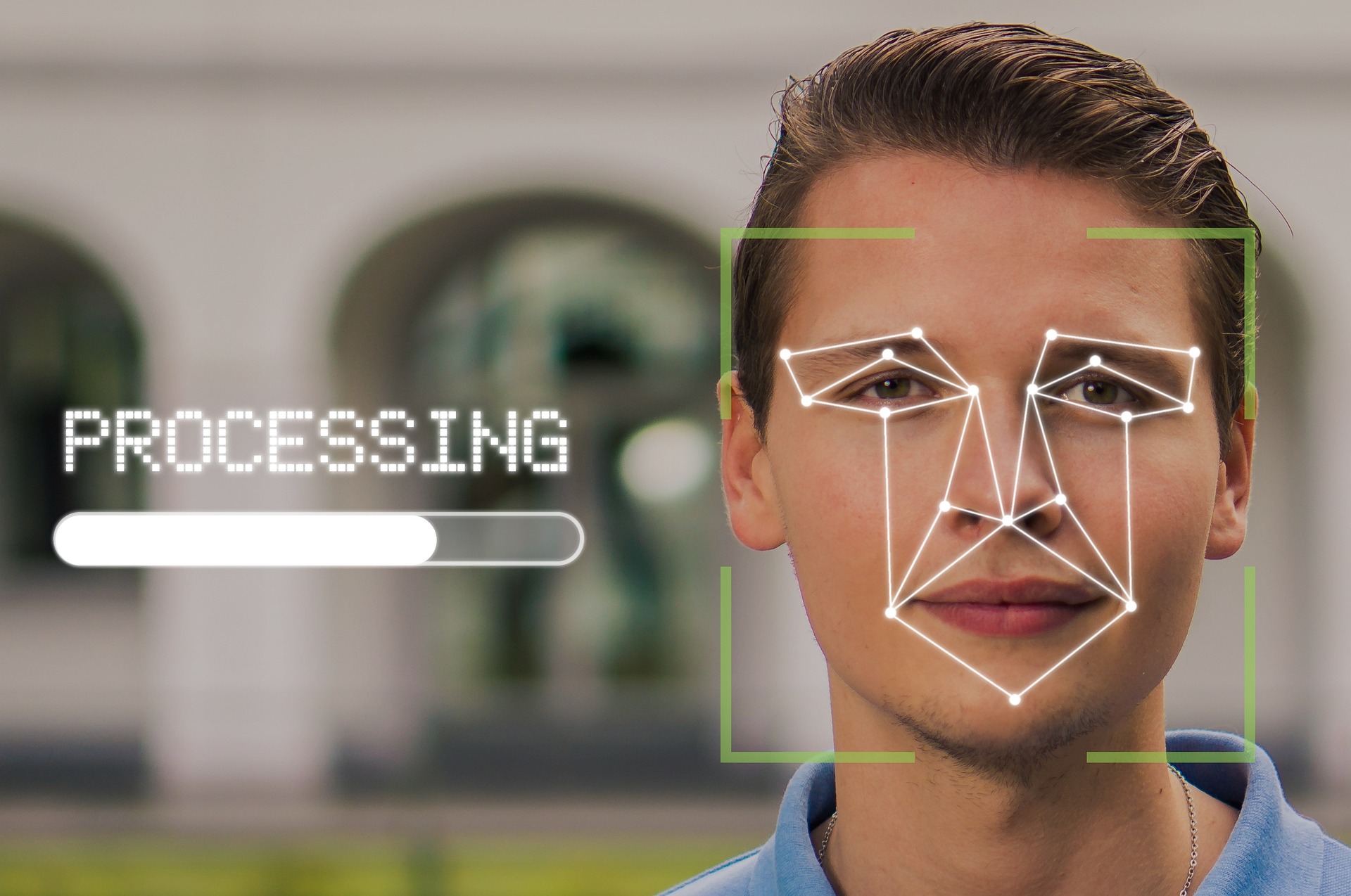

A big biometric security company in the UK, Facewatch, is in hot water after its facial recognition system caused a major snafu: it wrongly identified a 19-year-old girl as a shoplifter.

It’s not the first time facial recognition tech has been misused, and it certainly won’t be the last. The UK in particular has caught a lotta flak over this.

We seem to have a hard time connecting the digital world to the physical world and realizing just how interwoven they are at this point.

Therefore, I made an open source website called idcaboutprivacy to demonstrate the importance—and dangers—of tech like this.

It’s a list of news articles that demonstrate real-life situations where people are impacted.

If you wanna contribute to the project, please do. I made it simple enough that you don’t need to know Git or anything advanced to contribute. (I don’t even really know Git.)

I wish I could find an English source about the guy who was woken up by police assaulting him in his bed because he’d sent private sexy photos of himself and his boyfriend via Yahoo Mail. It’s definitely one of the things that “radicalised” me.

I’ll link your site on my personal website, which has a link collection. Seems cool.

Nice, thanks. Your site is really clean. Dig it.

What a great idea for a page. People are becoming blasé about privacy even though it’s still important.

Glad you like it.

And yeah, it’s foundational. We tolerate things digitally that we’d never tolerate in person.

Once I start drawing analogies between digital and physical concepts in a conversation, it seems to “click” in people’s heads, and they end up saying something along the lines of, “You’re right. It makes sense.”

Hence this project. I hope people can use this website and share it with anyone who needs help understanding how this affects all of us now, not in the future.

From your webpage: Privacy because protects our freedom to be who we are.

I think a word is missing in that sentence.

Fixed it, thanks for flagging

They accidentally the whole word.

Lol it was the other way around… I actually added a word instead. Fixed it now.

Please tell me a lawyer is taking this on pro bono and is about to sue the shit out of Facewatch.

For what? A private business can exclude anyone for any reason or no reason at all so long as the reason isn’t a protected right.

I’d be surprised if being born with a specific face configuration isn’t protected in the same way that race and gender are.

In the UK you can pretty much guarantee that won’t happen, because it would shut down their surveillance state.


Even if she were the shoplifter, how would that work? “Sorry mate, you shoplifted when you were 16, now you can never buy food again.”?

Sounds like a VAC ban.

Stop giving corporations the power to blacklist us from life itself.

you will sit down and be quiet, all you parasites stifling innovation, the market will solve this, because it is the most rational thing in existence, like trains, oh god how I love trains, I want to be f***ed by trains.

~~Rand

I can see the Invisible Hand of the Free Market, it’s giving me the finger.

Can it give invisible hand jobs?

Yes, but they’re in the “If you have to ask, you couldn’t afford it in three lifetimes.” price range

Darn!

Right up your ass, no less.

It charged me for lube, and I thought about paying for it, but same-day shipping was a bitch and a half… I tried second-class mail, but I think my bumhole would have stretched enough for this to stop hurting before it got anywhere near here, so I just opted for that.

Are you suggesting they shouldn’t be allowed to ban people from stores? The only problem I see here is misused tech. If they can’t verify the person, they shouldn’t be allowed to use the tech.

I do think there need to be repercussions for situations like this.

Well, there should be limits on their ability to do so. At the very least there should be police reports or something. I mean, what if facial recognition AI catches on in grocery stores? Is this woman just banned from all grocery stores now? How the fuck is she going to eat?

That’s why I said this was a misuse of tech. Because that’s extremely problematic. But there’s nothing to stop these same corps from doing this to a person even if the tech isn’t used. This tech just makes it easier to fuck up.

I’m against the use of this tech to begin with, but I’m having a hard time figuring out whether people are more upset about the use of the tech or about the person being banned from a lot of stores because of it. Because those are separate problems, and the latter seems like more of an issue than the former. But the bans also make it matter a lot more when the tech fucks up.

I wish I could remember where I saw it, but years ago I read something about policing that said a certain amount of human inefficiency in a process is actually a good thing: it helps check the bias and overreach that can occur when technology can do in seconds what would take a human days or months.

In this case, if a person is enough of a problem that their face becomes known at certain branches of a store, it’s entirely reasonable for that store to post a sign with their face saying they aren’t allowed in. In my mind it would essentially create a certain equilibrium in terms of consequences and results. In addition to getting in trouble for the theft itself, that person also faces a certain amount of hardship: they may have to travel 40 minutes to do their shopping instead of 5 minutes to the nearby store. A sign and people’s memory also aren’t permanent, so after a while that person would probably be able to go back to that store if they had actually grown out of it.

Or something to that effect. If they steal so much that they become known to the legal system there should be processes in place to address it.

And even with all that said, I’m just not that concerned with theft at large corporate retailers, considering wage theft dwarfs theft by individuals by at least an order of magnitude.

Well, this blows the “if you’ve not done anything wrong, you have nothing to worry about” argument out of the water.

That argument was only ever made by dumb fucks or evil fucks. The article reports about an actual occurrence of one of the problems of such technology that we (people who care about privacy) have warned about from the beginning.

the way I like to respond to that:

“ok, pull down your pants and hand me your unlocked phone”

I’m stealing this.

Death to the worthless corpo world that allowed this bullshit in the first place! Towards anarchist communism and social revolution!

Please grow and change as a person

There we go, guys. It’s funny that nutty conspiracy theorists are against masks when they should be wearing frickin’ balaclavas.

Despite concerns about accuracy and potential misuse, facial recognition technology seems poised for a surge in popularity. California-based restaurant CaliExpress by Flippy now allows customers to pay for their meals with a simple scan of their face, showcasing the potential of facial payment technology.

Oh boy, I can’t wait to be charged for someone else’s meal because they look just enough like me to trigger a payment.

I have an identical twin. This stuff is going to cause so many issues even if it worked perfectly.

Sudden resurgence of the movie “Face/Off”

If it works anything like Apple’s Face ID, twins don’t actually map all that similarly. In the general population, the probability of two people’s underlying facial structure mapping as a match is approximately 1 in 1,000,000. It is slightly higher for identical twins, and higher again for prepubescent identical twins.

And yet this woman was mistaken for a 19-year-old 🤔

Shitty implementation doesn’t mean shitty concept, you’d think a site full of tech nerds would understand such a basic concept.

Pretty much everyone here agrees that it’s a shitty concept. Doesn’t solve anything and it’s a privacy nightmare.

Well I guess we’re lucky that no one on Lemmy has any power in society.

Meaning, 8’000 potential false positives per user globally. About 300 in the US, 80 in Germany, 9 in Switzerland.
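The arithmetic behind those numbers is just population times match probability. Here’s a rough sketch, assuming the 1-in-1,000,000 figure quoted above, that each stranger matches independently (a simplification), and rounded population figures I’m supplying myself (not official statistics):

```python
# Expected number of look-alikes for one user: population * match probability
# (the mean of a binomial). Independence between strangers is an assumption.
MATCH_PROBABILITY = 1 / 1_000_000  # the Face ID-style figure quoted in the thread

# Rough, rounded population figures (assumptions, not official data).
POPULATIONS = {
    "World": 8_000_000_000,
    "US": 330_000_000,
    "Germany": 84_000_000,
    "Switzerland": 8_900_000,
    "Iceland": 380_000,
}

def expected_false_matches(population: int, p: float = MATCH_PROBABILITY) -> float:
    """Expected number of strangers in `population` who would match one face."""
    return population * p

for region, people in POPULATIONS.items():
    print(f"{region}: ~{expected_false_matches(people):,.1f}")
```

Iceland comes out below one expected look-alike, which is the joke in the next reply.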

Might be enough for Iceland.

Yeah, which is a really good number and allows for near-complete elimination of false matches along this vector.

I promise bro it’ll only starve like 400 people please bro I need this

Who’s getting starved because of this technology?

A single mum with no support network who can’t walk into any store without getting physically ejected, maybe?

You’re perfectly OK with 8000 people worldwide being able to charge you for their meals?

No you misunderstood. That is a reduction in commonality by a literal factor of one million. Any secondary verification point is sufficient to reduce the false positive rate to effectively zero.
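A minimal sketch of that argument, assuming the face match and the second check fail independently (correlated failures, like a look-alike who also has your card, would break it). The 1-in-1,000,000 face figure is from the thread; the 1-in-10,000 rate for the second factor is a hypothetical placeholder, roughly one blind guess at a 4-digit PIN:

```python
# Combined false-positive rate when a payment needs BOTH a face match AND a
# second, independent check. Independence is an assumption, not a given.
from math import prod

def combined_false_positive_rate(*rates: float) -> float:
    """Probability that every independent check wrongly passes at the same time."""
    return prod(rates)

FACE_FPR = 1 / 1_000_000         # figure quoted in the thread
SECOND_FACTOR_FPR = 1 / 10_000   # hypothetical: one guess at a 4-digit PIN

rate = combined_false_positive_rate(FACE_FPR, SECOND_FACTOR_FPR)
print(f"combined: 1 in {1 / rate:,.0f}")
```

Under these assumptions the combined rate is about 1 in 10 billion, though, as the replies point out, the second factor then does most of the work on its own.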

secondary verification point

Like, running a card-sized piece of plastic across a reader?

It’d be nice if they were implementing this to combat credit card fraud or something similar, but that’s not how this is being deployed.

Which means the face recognition was never necessary. It’s a way for companies to build a database that will eventually get exploited. 100% guarantee.

Ok, some context here from someone who built and worked with this kind of tech for a while.

Twins are no issue. I’m not even joking, we tried for multiple months in a live test environment to get the system to trip over itself, but it just wouldn’t. Each twin was detected perfectly every time. In fact, I myself could only tell them apart by their clothes. They had very different styles.

The reality with this tech is that, just like everything else, it can’t be perfect (at least not yet). For all the false detections you hear about, there have been millions upon millions of correct ones.

it can’t be perfect (at least not yet).

Or ever, because it locks you out after a drunken night otherwise.

Or ever because there is no such thing as 100% in reality. You can only add more digits at the end of your accuracy, but it will never reach 100.

Twins are no issue. Random ass person however is. Lol

Yes, because like I said, nothing is ever perfect. There can always be a billion little things affecting each and every detection.

A better statement would be “only one false detection out of 10 million”

You want to know a better system?

What if each person had some kind of physical passkey that linked them to their money, and they used that to pay for food?

We could even put a bunch of security around this passkey that makes it really easy to disable if it gets lost or stolen.

As for shoplifting, what if we had some kind of societal system that levied punishments against people by providing a place where the victim and accused can show evidence for and against the infraction, and an impartial pool of people decides if they need to be punished or not.

Another way to look at that is ~810 people (8.1 billion ÷ 10 million) having an issue with a different 810 people every single day, assuming only one scan per day. That’s about 295,650 false matches a year, so up to ~591,000 people having a huge fucking problem at least once every single year.
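The figures come from a simple multiplication. Here’s a sketch assuming the 1-in-10-million rate from the comment above, a world population of about 8.1 billion, one scan per person per day, and that every false match involves two distinct people. It ignores repeat victims, so the yearly head count is an upper bound:

```python
# Daily and yearly false matches at a fixed per-scan false-positive rate.
# All inputs are assumptions taken from or implied by the thread.
WORLD_POPULATION = 8_100_000_000
SCANS_PER_PERSON_PER_DAY = 1
FALSE_POSITIVE_RATE = 1 / 10_000_000

daily_false_matches = WORLD_POPULATION * SCANS_PER_PERSON_PER_DAY * FALSE_POSITIVE_RATE
yearly_false_matches = daily_false_matches * 365
# Each false match affects the person scanned and the person they're mistaken for.
people_affected_per_year = 2 * yearly_false_matches

print(f"per day: {daily_false_matches:,.0f}")
print(f"per year: {yearly_false_matches:,.0f}")
print(f"people affected per year (upper bound): {people_affected_per_year:,.0f}")
```

That works out to about 810 false matches a day and 295,650 a year, touching up to roughly 591,300 people.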

I have this problem with my face in the TSA pre and passport system, and every time I fly it gets worse, because their confidence that it’s correct keeps going up and their trust in my actual fucking ID keeps going down.

I have this problem with my face in the TSA pre and passport system

Interesting. Can you elaborate on this?

Edit: downvotes for asking an honest question. People are dumb

And a lot of these face recognition systems are notoriously bad with dark skin tones.

Even if someone did steal a Mars bar… banning them from all food-selling establishments seems… disproportionate.

Like if you steal out of necessity, and get caught once, you then just starve?

Obviously not all grocers/chains/restaurants are that networked yet, but are we gonna get to a point where hungry people are turned away at every business that provides food, once they are on “the list”?

No no, that would be absurd. You’ll also be turned away if you are not on the list if you’re unlucky.

They’ve essentially created their own privatized law enforcement system. They aren’t allowed to enforce their rules the same way a government would be, but punishment like banning a person from huge swaths of economic life can still be severe. The worst part is that private legal systems almost never have any concept of rights or due process, so there is absolutely nothing stopping them from being completely arbitrary in how they apply their punishments.

I see this kind of thing as being closely aligned with right wingers’ desire to privatize everything, abolish human rights, and just generally turn the world into a dystopian hellscape for anyone who isn’t rich and well connected.

I can see this being used against ex-employees.

We have so many dystopian futures and we decided to invent a new one.

The developers should be looking at jail time, as they falsely accused someone of committing a crime. This should be treated exactly as if I had SWATed someone.

I get your point but totally disagree that this is the same as SWATing. People can die from that. While this is bad, she was excluded from stores, not murdered.

You lack imagination. What happens when the system mistakenly identifies someone as a violent offender and they get tackled by a bunch of cops, likely resulting in bodily injury?

This happens in the USA without face recognition

That would then be an entirely different situation?

I mean, the article points out that the lady in the headline isn’t the only one who has been affected; a dude was falsely detained by cops after they parked a facial recognition van on a street corner and grabbed anyone who was flagged.

No, it wouldn’t be. The base circumstance is the same, the software misidentifying a subject. The severity and context will vary from incident to incident, but the root cause is the same - false positives.

There’s no process in place to prevent something like this going very very bad. It’s random chance that this time was just a false positive for theft. Until there’s some legislative obligation (such as legal liability) in place to force the company to create procedures and processes for identifying and reviewing false positives, then it’s only a matter of time before someone gets hurt.

You don’t wait for someone to die before you start implementing safety nets. Or rather, you shouldn’t.

That’s not very reassuring, we’re still only one computer bug away from that situation.

Presumably she wasn’t identified as a violent criminal because the facial recognition system didn’t associate her duplicate with that particular crime. The system would be capable of associating any set of crimes with a face. It’s not like you get a whole new face for each different possible crime. So, we’re still one computer bug away from seeing that outcome.

In the UK at least a SWATing would be many many times more deadly and violent than a normal police interaction. Can’t make the same argument for the USA or Russia, though.

I’m not so sure the blame should solely be placed on the developers - unless you’re using that term colloquially.

Developers were probably the first people to say that it isn’t ready. Blame the salespeople who will say anything for money.

It’s impossible to have a 0% false positive rate; it will never be ready, and innocent people will always be affected. The only way to have a 0% false positive rate is with the following algorithm:

```python
def is_shoplifter(face_scan):
    # Never flags anyone: zero false positives, but also zero shoplifters caught.
    return False
```
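To make the trade-off concrete, here’s a toy sketch of how a threshold on a match score trades false positives for missed matches. The scores are invented for illustration, not real Facewatch (or any vendor’s) data, and real systems are more involved. Raising the threshold cuts false positives but misses more real matches, and the only setting guaranteed to flag no innocent person on any input is the one that flags nobody, like the `is_shoplifter` joke above:

```python
# Toy illustration: flag a face when its similarity score reaches a threshold.
# Scores are made-up numbers. "genuine" = the watchlisted person,
# "impostor" = somebody else who happens to look similar.
genuine_scores = [0.91, 0.88, 0.95, 0.79, 0.97]
impostor_scores = [0.12, 0.35, 0.81, 0.40, 0.96]

def rates(threshold: float) -> tuple[float, float]:
    """Return (false_positive_rate, true_positive_rate) at this threshold."""
    fpr = sum(s >= threshold for s in impostor_scores) / len(impostor_scores)
    tpr = sum(s >= threshold for s in genuine_scores) / len(genuine_scores)
    return fpr, tpr

for t in (0.5, 0.8, 0.9, 1.01):  # 1.01 is above every score: nobody gets flagged
    fpr, tpr = rates(t)
    print(f"threshold {t:.2f}: FPR={fpr:.0%}  TPR={tpr:.0%}")
```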

Oh good… janky oversold systems that do a lot of automation on a very shaky basis are also having high impacts when screwing up.

Also “Facewatch” is such an awful sounding company.

This is why some UK leaders wanted out of EU, to make their own rules with way less regard for civil rights.

It’s the Tory way. Authoritarianism, culture wars, fucking over society’s poorest.

nah i think the main thing was a super fragile identity. i mean they’ve been shit the whole time, since before the EU. when talks between france, germany and the uk took place, they insisted on taking control of the EU.

if you live on an island for generations with limited new genetic input… well, that’s where you end up.

We humans have these things called “boats” that have enabled the British Isles to receive regular inputs of new genetic material. Pretty useful things, these boats, and somewhat pivotal in the history of the UK.

sure

This can’t be true. I was told that if she has nothing to hide she has nothing to worry about!

Is it even legal? What happened to consumer protection laws?

Brexit. The EU has laws forbidding stuff like this.