Intellectual property is fake lmao. Train your AI on whatever you want

You are allowed to use copyrighted content for training. I recommend reading this article by Kit Walsh, a senior staff attorney at the EFF, if you haven’t already. The EFF is a digital rights group that most recently won a historic case: border guards now need a warrant to search your phone.

I agree.

The comparison doesn’t work, because the AI is replacing the pencil or other drawing tool. And we aren’t saying pencil companies are selling you Mario pics because you can draw a Mario picture with a pencil either. Just because the process of how the drawing is made differs doesn’t change the concept behind it.

An AI tool that advertises Mario pictures would break copyright/trademark laws and hear from Nintendo quickly.

I don’t buy the pencil comparison. If I have a painting in my basement that has a distinctive style, but has never been digitized and trained upon, I’d wager you wouldn’t be able to recreate either that image or its style. What gives? Because AI is not a pencil but more like a data mixer you throw complete works into and it spews out collages. Maybe collages of very finely shredded pieces, to the point you couldn’t even tell, but pieces of original works nonetheless. If you put any non-free works in it, they definitely contaminate the output, and so the act of putting them in in the first place should be a copyright violation in itself. The same as if I were to show you the image in question and you decided to recreate it: I can sue you and I will win.

I don’t think how you interact with a tool matters. Typing what you want, drawing it yourself, or clicking through options is all the same. There are even other programs that allow you to draw by typing. They are way more difficult but again, I don’t think the difficulty matters.

There are other tools that allow you to recreate copyrighted material fairly easily. Character creators being on the top of the list. Games like Sims are well known for having tons of Sims that are characters from copyrighted IP. Everyone can recreate Barbie or any Disney Princess in the Sims. Heck, you can even download pre made characters on the official mod site. Yet we aren’t calling out the Sims for selling these characters. Because it doesn’t make sense.

The problem is that you might technically be allowed to, but that doesn’t mean you have the funds to fight every court case from someone insisting that you can’t or shouldn’t be allowed to. There are some very deep pockets on both sides of this.

Let them come.

I like your moxie, soldier

That’s not it going both ways. You shouldn’t be allowed to use anyone’s IP against the copyright holder’s wishes. Regardless of size.

No, it’s about if you make a game that’s worse or off-brand. If you make a bunch of Mario games into horror games and then everyone thinks of horror when they think of Mario then good or bad, you’ve ruined their branding and image. Equally, if you make a bunch of trash and people see Mario as just a trash franchise (like how most people see Sonic games) then it ruins Nintendo’s ability to capitalize on their own work.

No one is worried about a fan-made Mario game being better.

Actually we do. If your game is so terrible, it can get removed from the Google Play store. It has to be really bad and they rarely do it. Steam does this as well. Epic avoids this by vetting the games they put on their platform site first. It’s why the term asset flip exists.

No. Fuck that. I don’t consent to my art or face being used to train AI. This is not about intellectual property, I feel my privacy violated and my efforts shat on.

Unless you have been locked in a sensory deprivation tank for your whole life, and have independently developed the English language, you too have learned from other people’s content.

Well, my knowledge can’t be used as a tool of surveillance by the government and the corporations, and I have my own feelings, intent and everything in between. AI is artificial intelligence; AI is not an artificial person. AI doesn’t have thoughts, feelings or ideals. AI is a tool, an empty shell that is used to justify stealing data and surveillance.

This very comment is a resource that government and corporations can use for surveillance and training.

AI doesn’t have thoughts? We don’t even know what a thought is.

We may not know what comprises a thought, but I think we know it’s not matrix math, which is basically all an LLM is.

Yet we live in a world where people will profit from the work and creativity of others without paying any of it back to the creator. Creating something is work, and we don’t live in a post-scarcity communist utopia. The issue is the “little guy” always getting fucked over in a system that’s pay-to-play.

Donating effort to the greater good of society is commendable, but people also deserve to be compensated for their work. Devaluing the labor of small creators is scummy.

I’m working on a tabletop setting inspired by the media I consumed. If I choose to sell it, I’ll be damned if I’m going to pay royalties to the publishers of every piece of media that inspired me.

If you were a robot that never needed to eat or sleep and could generate 10,000 tabletop RPGs an hour with little to no creative input then I might be worried about whether or not those media creators were compensated.

Then don’t post your art or face publicly. I agree with you if it’s obtained through malicious ways, but if you post it publicly, then expect it to be used publicly.

If you post your art publicly why should it be legal for Amazon to take it and sell it? You are deluding yourself if you believe AI having a get out of jail free card on IP infringement won’t be just one more source of exploitation for corporations.

If the large corporations can use IP to crush artists, artists might as well try to milk every cent they can from their labor. I dislike IP laws as well, and you can never use the masters’ tools to dismantle their house, but you can sure as shit do damage and get money for yourself.

Luckily, AI aren’t the master’s tools, they’re a public technology. That’s why they’re already trying their hand at regulatory capture. Just like they’re trying to destroy encryption. Support open source development, it’s our only chance. Their AI will never work for us. John Carmack put it best.

“Artists don’t deserve to profit off their own work” is stupid as shit. Complain about copyright abuse and lobbying a la Disney and I’ll be right there with you, but people shouldn’t have the right to take your work and profit off it without either your consent or paying you for it.

Artists and other creatives who actually do work to create art (not shitting out text into an image generator) should take every priority over AI “creators.”

Based take.

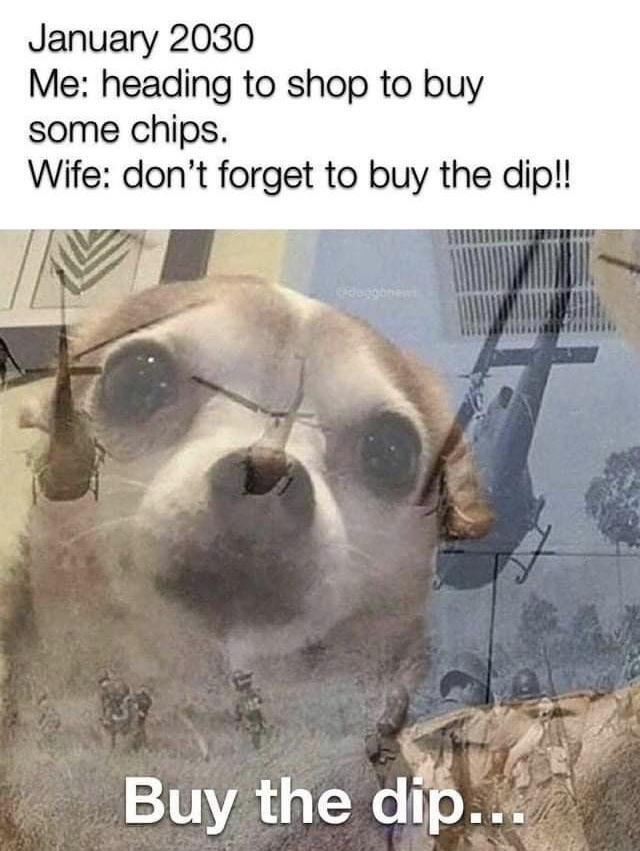

Me, literally training a neural net to generate pictures of carrot cakes right now:

WHERE DID YOU GET THE DATA?

AI CEOs be like

Online Communism 😃

Real Life Communism 😠

Communism for fascists and fascism for the commons. It’s the American way.

Annoying and aggressive about it to the point where you’d like to wring their necks? Yeah, that’s exactly what that’s like.

For Stable Diffusion, it comes from images on Common Crawl through the LAION-5B dataset.

I feel the current AI crawling bots + “opt-out your data” tactic is ingeniously evil.

it’s funny, but still, where did you get the data? XD

It’s hilarious really

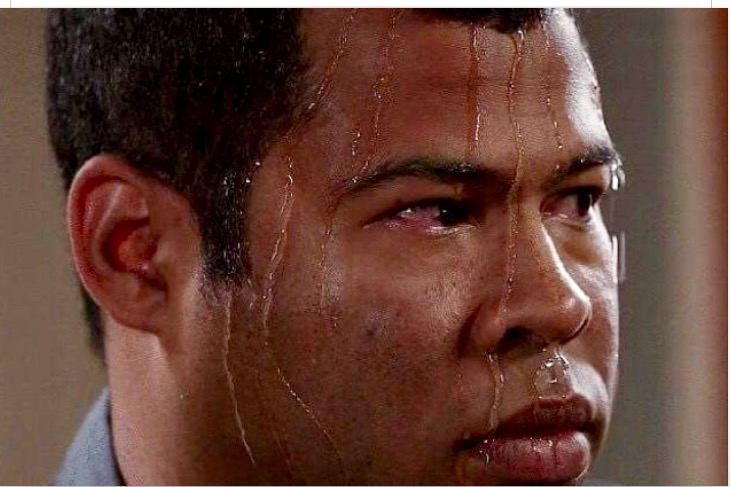

Companies have been stealing data for so long, and then when another company comes and steals their data by scraping it, they go surprised Pikachu.

From Reddit comments.

The best part is the end result, not where the data comes from. Tired of hearing about AI models “stealing” data. I put all my art, designs and code online and assume it’ll be used to train models (which I’ll be able to use later on)

I interpreted this less as being about art models and more about predictive models trained off of data with racial prejudice (i.e. crime prediction models trained off of old police data)

You’re putting your stuff out there so some cryptobro cracker can steal it, claim credit for it, and then call you a peasant for helping him make it.

It’s sort of an accelerationist take on broken IP law, I’m sure of it.

Never forget: businesses do not own data about you. The data belongs to the data subject, businesses merely claim a licence to use it.

found it

One thing I’ve started to think about for some reason is the problem of using AI to detect child porn. In order to create such a model, you need actual child porn to train it on, which raises a lot of ethical questions.

Cloudflare says they trained a model on non-cp first and worked with the government to train on data that no human eyes see.

It’s concerning there’s just a cache of cp existing on a government server, but it is for identifying and tracking down victims and assailants, so the area could not be more grey. It is the greyest grey that exists. It is more grey than #808080.

Well, many governments had no issue taking over a cp website and hosting it for months to come, using it as a honeypot. Still, they hosted and distributed cp, possibly to thousands of unknown customers who can redistribute it.

You absolutely do not need real CSAM in the dataset for an AI to detect it.

It’s pretty genius actually: just like you can make the AI create an image with prompts, you can get prompts from an existing image.

An AI detecting CSAM would have to be trained on nudity and on children separately. If an image-to-prompts conversion results in “children” AND “nudity”, it is very likely the image was of a naked child.

This has a high false positive rate, because non-sexual nude images of children, which quite a few parents have (like images of their child bathing) would be flagged by this AI. However, the false negative rate is incredibly low.

It therefore suffices for an upload filter for social media but not for reporting to law enforcement.
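For what it’s worth, here is a minimal Python sketch of that AND rule. The tag names, the 0.5 threshold and both function names are made up for illustration, and the scores are assumed to come from some image-tagging model that is not shown here. It only illustrates why the rule catches anything scoring high on both tags, including innocent bath photos, which is where the false positives come from.

```python
def flag_image(tag_scores, threshold=0.5):
    """Return True when BOTH hypothetical tags score at or above the threshold.

    tag_scores is assumed to be a dict of tag name -> confidence in [0, 1],
    as produced by some image-to-tags model (not implemented here).
    """
    child = tag_scores.get("child", 0.0)
    nudity = tag_scores.get("nudity", 0.0)
    return child >= threshold and nudity >= threshold


def upload_decision(tag_scores, threshold=0.5):
    """Upload-filter use only: hold the file for review, never auto-report."""
    return "hold_for_review" if flag_image(tag_scores, threshold) else "allow"
```

Lowering the threshold trades even more false positives for fewer false negatives, which fits the point above that this is good enough for an upload filter but not for reporting to law enforcement.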

This dude isn’t even whining about the false positives, they’re complaining that it would require a repository of CP to train the model. Which yes, some are certainly being trained with the real deal. But with law enforcement and tech companies already having massive amounts of CP for legal reasons, why the fuck is there even an issue with having an AI do something with it? We already have to train mods on what CP looks like, there is no reason it’s more moral to put a human through this than a machine.

This is a stupid comment trying to hide as philosophical. If your website is based in the US (like 80 percent of the internet is), you are REQUIRED to keep any CSAM uploaded to your website and report it. Otherwise, you’re deleting evidence. So all these websites ALREADY HAVE giant databases of child porn. We learned this when Lemmy was getting overrun with CP and DB0 made a tool to find it. This is essentially just using shit any legally operating website would already have around the office, and having a computer handle it instead of a human who could be traumatized or turned on by the material. Are websites better for just keeping a database of CP and doing nothing but reporting it to cops who do nothing?

Yeah, a real fuckin moral quandary there, I bet this is the question that killed Kant.

I’m pretty sure those AI models are trained on hashes of the material, not the material directly, so all you need to do is save a hash of the offending material in the database any time that type of material is seized

That wouldn’t be ai though? That would just be looking up hashes.

Nah, flipping the image would completely bypass a simple hash map

From my very limited understanding it’s some special hash function that’s still irreversible but correlates more closely with the material in question, so an AI trained on those hashes would be able to detect similar images because they’d have similar hashes, I think
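To make the difference concrete, here is a rough Python sketch comparing an exact hash with a very simple perceptual hash (an "average hash"). This is an illustration under assumptions, not what any real detection system uses: the 4x4 grid of grayscale values is made-up data and all function names are hypothetical.

```python
import hashlib


def exact_hash(pixels):
    """Cryptographic hash of the raw pixel bytes: any change alters it."""
    flat = bytes(v for row in pixels for v in row)
    return hashlib.sha256(flat).hexdigest()


def average_hash(pixels):
    """One bit per pixel: 1 if the pixel is brighter than the image mean."""
    flat = [v for row in pixels for v in row]
    mean = sum(flat) / len(flat)
    return [1 if v > mean else 0 for v in flat]


def hamming(a, b):
    """Number of positions where two bit lists differ."""
    return sum(x != y for x, y in zip(a, b))


# Made-up 4x4 grayscale "image": dark left half, bright right half.
original = [
    [10, 20, 200, 210],
    [15, 25, 205, 215],
    [12, 22, 202, 212],
    [11, 21, 201, 211],
]
brightened = [[min(v + 3, 255) for v in row] for row in original]
mirrored = [list(reversed(row)) for row in original]

print(exact_hash(original) == exact_hash(brightened))              # False
print(hamming(average_hash(original), average_hash(brightened)))   # 0
print(hamming(average_hash(original), average_hash(mirrored)))     # 16
```

The slightly brightened copy gets a completely different SHA-256 but an identical average hash, so "similar image, similar hash" holds there. Mirroring still defeats this naive version, so the flipping objection above stands for simple schemes; a more robust system would need something on top of this.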

could you provide a source for this? that sounds very counterintuitive and bad for the hash functions, especially as the whole point of AI training in this case is detecting new images. And say a small boy at the beach wearing speedos has a lot of similarity to a naked boy. So looking by some resemblance in the hash function would require the hashes to practically be reversible.

I’m no expert, but we use that kind of hash at my company to detect fraudulent software. Here’s a Wikipedia link: https://en.m.wikipedia.org/wiki/Locality-sensitive_hashing
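As a rough idea of how locality-sensitive hashing can work, here is a small Python sketch of one well-known variant, random-hyperplane hashing. It is only an assumption that anything like this is used in the systems discussed here; the feature vectors are stand-ins for whatever numbers you would extract from a file, and all names are hypothetical.

```python
import random


def make_hyperplanes(dim, bits, seed=0):
    """Draw `bits` random directions in `dim`-dimensional space."""
    rng = random.Random(seed)
    return [[rng.gauss(0, 1) for _ in range(dim)] for _ in range(bits)]


def signature(vec, planes):
    """One bit per hyperplane: which side of the plane the vector falls on."""
    return tuple(
        1 if sum(p * v for p, v in zip(plane, vec)) >= 0 else 0
        for plane in planes
    )


def hamming(a, b):
    """Number of positions where two signatures differ."""
    return sum(x != y for x, y in zip(a, b))


planes = make_hyperplanes(dim=4, bits=16)
item = [1.0, 2.0, 3.0, 4.0]
scaled = [2.0 * v for v in item]     # same direction, different magnitude
opposite = [-v for v in item]        # points the other way entirely

print(hamming(signature(item, planes), signature(scaled, planes)))    # 0
print(hamming(signature(item, planes), signature(opposite, planes)))  # 16
```

Vectors pointing in similar directions tend to share most bits, so you can compare short signatures instead of the original data. The signature only records which side of each plane the vector is on, so it does not let you reconstruct the input, which is the answer to the reversibility worry above.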

All damn time as a project committee.